Video-enabled education is becoming increasingly popular in support of active learning in CS education. Although present work on both video-based learning and flipped classrooms emphasizes the necessity for students to view the materials, there is a lack of detailed, objective data on student viewing behaviors. This article uses fine-grained student log data from TrACE, an asynchronous media platform, to understand student viewing behaviors in three sections of a flipped CS1 course taught by the same instructor. We find that students often have low compliance with video viewing expectations in one section, and that re-watching course content does not often occur. Watching course content earlier has a significant correlation with course performance, and other behaviors correlate when compliance is not enforced via course requirements. These findings highlight concerns for flipped classroom researchers and suggest methods instructors can use to improve student viewing behaviors.

Introduction

The flipped classroom model continues to garner significant interest among both computing educators and STEM educators more generally. Put simply, a flipped class is one where students complete direct initial instruction tasks independently prior to course sessions, with face-to-face class time being reserved for hands-on activities related to the course material for which students have prepared [2, 8]. Discipline-based education conferences, like SIGCSE in computer science and ASEE in engineering, now regularly include multiple reports on practical experiences with flipping (see, e.g. [1, 5, 6, 7, 11]), and entire conferences on the flipped classroom have been formed in recent years. In the majority of both classroom reports and research studies on flipping, students' preparatory work takes the form of viewing web-based video lectures that contain similar content to more traditional face-to-face settings. While we acknowledge that not all flipped courses rely on video viewing as preparatory work, its prevalence motivates our focus here.

Most users of flipping undergird their work with theoretical arguments about active and constructivist learning and/or the practical benefits of time-shifting lecture through video-based instruction. Bishop and Verleger [2] summarize much of the common literature on student-centered learning theories and note their importance in the intentional design of classroom activities. They caution against conceptualizing "the flipped classroom based only on the presence (or absence) of computer technology such as video lectures" [2]. Nonetheless, many authors are quick to highlight intuitive benefits of video lectures, such as students' ability to work through material at their own pace and review content at a later time [5, 12].

Yet, reports on increased learning gains in flipped classrooms are mixed, and published studies often suffer from multiple confounds, which make interpreting and comparing results difficult [2]. Early work by Day and Foley [3] showed promising gains in computing courses, but recent controlled studies in several STEM disciplines at one institution showed no significant differences between courses that did and did not use videos [9, 13].

Studies of flipped classrooms commonly examine artifacts of student performance and attitudinal survey data, but the current literature suffers from a significant missing variable—objective behavioral data about how students actually interact with the online course content. Instructors generally assume that students watch videos as assigned and may use entrance quizzes at the start of class to further incentivize this behavior [7], but instructors are often left wondering to what degree students interacted with the materials.

In this paper, we seek to address this gap by providing empirical evidence related to common assumptions about students' viewing behaviors during a flipped CS1 class over the course of a full semester. Further, we examine how instructional and technological changes introduced over three iterations of the course impact student viewing behaviors. Specifically, we address the following research questions:

- [RQ1] To what extent do students actually prepare for class by viewing videos in advance?

- [RQ2] To what extent do students revisit course content?

- [RQ3] Which viewing behaviors correlate with course performance metrics?

- [RQ4] How do student viewing behaviors vary with sociotechnical changes in the class?

In the remainder of this paper we first provide a brief overview of recent reports of flipping in CS classrooms. We then detail specific aspects of the course and study methodology used here. Next, we outline the results of our data collection over three semesters, followed by a broader discussion of our findings relative to instructional changes. We conclude by examining implications of our work for both classroom practice and research on flipping.

Related Work

Research on implementations of flipped classrooms is plentiful, but student viewership trends and their potential effects on flipped classroom success are still relatively unexplored. Due to the nature of many flipped classrooms, watching videos prior to class is necessary to effectively learn the material and be prepared for the assigned tasks [11]. If a significant portion of students come unprepared for class, the instructor may be forced to spend more time reviewing the lecture material, taking away activity time [7]. Otherwise, group work will be less productive as some students will not have the requisite knowledge. Gannod et al. [5] pose a number of implementation strategies for maintaining student viewership, as this is considered a precursor to productive flipped classes.

Even though student preparation is widely recognized as being essential to student success in flipped classrooms, studies generally rely on student self-reports or do not consider the possibility of students not preparing for class in their research. Gehringer, in an implementation of a flipped classroom [6], reported that instructors thought students generally came to class prepared, likely due to a pre-quiz forcing students to keep up with material. However, students self-reported that they watched on average 11.6 out of 25 total videos (46%). Lacher and Lewis attempted to measure the legitimacy of quizzes as a measure of preparedness [7], but ultimately did not find evidence that entry quizzes improved learning outcomes. Lockwood and Esselstein, in another flipped class implementation, were worried that students' tendency to not complete assigned reading before class may carry over to lecture videos in the flipped setting [10]. They found that 70% of students reported having "almost always" watched the assigned videos before class, though students needed to get used to coming prepared.

Not only do students in several of these studies report low compliance with viewing videos, but self-reported measurements often suffer from recollection biases. This leaves one wondering whether at least some of the conflicting findings in studies of learning gains in flipped classes stem from a lack of consistent data about student preparation. Not only is this an important variable for research, but it would also serve as a key piece of formative data for instructors prior to the start of in-class activities. In this study we aim to provide such objective data and examine its relationship to student performance indicators.

Method and Data Collection

• Course Structure

The course studied in this paper is a typical 15-week, objects-late CS1 course covering introductory Java concepts and syntax for data-types, conditionals, loops, methods, etc. At the time data collection began, the instructor (the third author) had flipped the course for 1.5 years, and we examined data from three consecutive semesters (Fall 2014, Spring 2015, and Fall 2015 in North American vernacular). Students in this course come from a variety of majors. Basic college algebra is a prerequisite for the course, and students enrolled in the flipped section were required to own laptops which they had to bring to class for programming activities.

Across all semesters, the structure of the course remained largely the same and video lectures were unchanged. Students were assigned 25 videos to watch via the Web prior to coming to class during the semester; see Table 1 for summary data about the videos used. The 75-minute course period was divided between a brief summary lecture at the beginning of class (≈20 minutes), in-class activities (≈40 minutes), and a daily quiz distributed at the end of class (≈15 minutes). Summary lectures highlighted key takeaways from the video and were used to provide additional examples motivating the day's activities. The in-class activities included a mixture of reviewing the previous class' quiz, working through small programs as a class, in groups or individually, and taking time to explain concepts of the next programming assignment.

• TrACE and Class Integration

In course offerings prior to this study, students enrolled in the flipped CS1 course were instructed to prepare for class by viewing video lectures through the university's learning management system. During the study period, students used a new collaborative streaming platform called TrACE for this purpose. TrACE provides basic video playback functionality alongside affordances for students (and instructors) to add asynchronous discussions within a video at given times and physical locations [4]. Students are thus able to ask and answer questions or engage in other sense-making activities while completing their class preparations. Additionally, TrACE collects fine-grained log data about students' interactions with the system. This allows for examining viewing patterns at both the individual and class level.

Instructors using TrACE can take advantage of additional features to further integrate the system into their course. Due dates can be assigned to each video, which then allows TrACE to remind students of which videos to watch upon logging in. Instructors can insert special discussion types that automatically pause the playback interface for students and pose a free-response question. Lastly, the system also includes a basic analytics dashboard that allows instructors to investigate student viewing and posting behaviors on videos.

TrACE integration with the CS1 class evolved over the study period. During the Fall'14 term, it was used primarily as a delivery mechanism for the lecture videos instead of the LMS, with the intent that the instructor would be able to gain some insight into viewing behaviors via the analytics. There were no graded requirements for watching or otherwise interacting with the videos. For the following term (Spring'15), the instructor placed the following self-reflection question near the end of videos to encourage more student engagement, but interaction was not graded initially:

"Do you feel you have a good understanding of the material in this lecture? If not, what topic specifically would you like more information about? If yes, what would you feel comfortable explaining to someone else?"

Around mid-term (i.e., week 6, video 13), the instructor instituted a new policy that required students to add at least one post or reply to each video as part of a course participation grade. This policy was in place for the full duration of the Fall'15 academic term, and grading weights were adjusted to increase the emphasis on in-class work over quiz marks. This was intended to force the students to use the material they had watched, thus encouraging them to watch and interact with the lectures before coming to class. We will revisit these course changes in the discussion section as they relate to research question four.

• Data Collection and Processing

At the beginning of each semester, students enrolled in each of the three courses were given the opportunity to participate in this research study. For those consenting to participate, we analyzed two primary data sources: naturally occurring course performance metrics collected during the term (homework, exam, and course grades) and behavioral traces captured during their use of TrACE. Students enrolled in the course but who did not grant consent still had access to all system features, but their data was excluded from the analysis here. Out of the 30, 20, and 28 students in the Fall'14, Spring'15, and Fall'15 semesters respectively, 23, 19, and 24 students opted to participate in the study.

Fine-grained log data for each student was parsed into sessions, wherein an individual session represents an instance of a student opening a particular video in a course. A session thus encapsulates all actions from that point until the student left the video page or the browser connection timed out. In order to account for misclicks leading to seconds-long sessions on an unintended video, we then filtered out all empty sessions where no student actions were recorded beyond opening and closing the video. Further, as we were interested in the behaviors of students who had the opportunity to watch every lecture video, only those who completed the full course were included. We filtered out data from those who dropped the course early or failed the course due to academic dishonesty. For these reasons, 4, 2, and 1 students were left out in the Fall'14, Spring'15, and Fall'15 semesters respectively. After removing data from those who opted out of the study and those who were filtered out, our dataset included data from 75.6% of students from all semesters.
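The session construction and empty-session filter described above can be sketched as follows. This is only an illustration under stated assumptions: the text does not describe the TrACE log schema, so the `Event` fields, the action names (`open`, `play`, `pause`, `seek`, `close`), and all function names here are hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical event record; the real TrACE log format is not given in the text.
@dataclass
class Event:
    student: str
    video: int
    timestamp: float  # seconds since an arbitrary epoch
    action: str       # assumed names: "open", "play", "pause", "seek", "close"

@dataclass
class Session:
    student: str
    video: int
    events: list = field(default_factory=list)

def build_sessions(events):
    """Group events into sessions; every "open" starts a new session."""
    sessions = []
    current = {}  # student -> session that is currently open
    for ev in sorted(events, key=lambda e: e.timestamp):
        if ev.action == "open":
            current[ev.student] = Session(ev.student, ev.video)
            sessions.append(current[ev.student])
        sess = current.get(ev.student)
        if sess is not None and sess.video == ev.video:
            sess.events.append(ev)
            if ev.action == "close":
                # An explicit close ends the session; a timeout simply
                # leaves it open until the student's next "open".
                del current[ev.student]
    return sessions

def is_empty(session):
    """True if nothing was recorded beyond opening and closing the video."""
    return all(ev.action in ("open", "close") for ev in session.events)

def non_empty_sessions(events):
    """Sessions that survive the misclick filter."""
    return [s for s in build_sessions(events) if not is_empty(s)]
```

In this sketch a misclick (an `open` followed directly by a `close`) produces a session that `non_empty_sessions` drops, while any session containing a playback action is kept.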

The filtered set of sessions was then used to calculate various metrics for student interactions on videos in the course including data about content coverage and viewing punctuality. We will discuss the details of each of these metrics alongside the data in the following section.

Results

Results in this section are structured around the first three research questions (RQ1-RQ3) posed in the introduction.

• Student Preparation (RQ1)

We examine students' preparation for the flipped class sessions using three primary metrics: videos viewed, coverage, and punctuality. Videos viewed examines the tendency of students to open videos at all. Coverage statistics measure the percentage of each video played by a student. Punctuality deals with the time at which students accessed the content for the first time, with the hope being that students accessed content prior to the assigned class period. Table 2 compares differences between averages for these values in each course. We present results of these analyses in turn.

The first of these metrics, videos viewed, is the percentage of videos accessed by students out of the 25 videos in the course. This is a common measure of compliance reported in several other studies [6, 10], but here it is computed from objective log file data rather than self-reports. Figure 1a presents this viewing percentage over time for each video in the term. Shaded regions represent pairs of videos due on a single class day. For every class, the first video is very high, with over 90% of the class watching it. A pronounced decreasing trend in viewing is apparent in Fall'14, though Spring'15 and Fall'15 remain fairly high throughout the semester, with some videos having a 100% access rate.

A one-way ANOVA showed statistically significant differences in video viewing between the groups (F(2, 56) = 36.40, p < 0.001) (see Table 2). Post-hoc, pair-wise analysis with Bonferroni correction identified Fall'14 students as accessing significantly fewer videos than either Spring'15 (p < 0.001) or Fall'15 (p < 0.001). However, there was not a significant difference between Spring'15 and Fall'15.
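For readers unfamiliar with the test, the F statistic of a one-way ANOVA and the Bonferroni adjustment can be computed as in the sketch below. It is a generic textbook formulation, not the authors' analysis script, and the function names are assumptions. Computing the p-value additionally requires the F distribution (available in most statistics libraries) and is omitted here.

```python
def one_way_anova(groups):
    """Return (F, df_between, df_within) for a list of numeric samples."""
    k = len(groups)
    n = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n
    means = [sum(g) / len(g) for g in groups]
    # Between-group and within-group sums of squares.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

def bonferroni(p_value, n_comparisons):
    """Bonferroni-adjusted p-value for one of n_comparisons pairwise tests."""
    return min(1.0, p_value * n_comparisons)
```

With three course sections there are three pairwise comparisons, so each raw pairwise p-value would be multiplied by 3. The degrees of freedom are k - 1 and N - k, so the reported (2, 56) is consistent with three groups and 59 students in total.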

An obvious limitation of the videos viewed metric is that it simply provides a binary measure of whether a student has accessed a video, and does not account for how much of a video was actually watched during a session. Thus we define content coverage as the proportion of each video actually played by the student. It is computed using data about play-head positioning when various actions take place within a session (e.g., play, pause, and seek; see footnote 1). For each student we computed the average coverage across all 25 videos and then a class average from these partial averages, which can be seen in Table 2.
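One way to turn play-head data into a coverage value is to take the union of the played intervals and divide by the video length. The sketch below assumes the log has already been reduced to `(start, end)` intervals in seconds; that reduction and all names are assumptions, since the text does not specify how TrACE derives coverage.

```python
def coverage(intervals, duration):
    """Fraction of a video covered by the union of played (start, end) intervals."""
    covered = 0.0
    cur_start = cur_end = None
    for start, end in sorted(intervals):
        # Clamp to the video bounds and skip degenerate intervals.
        start, end = max(0.0, start), min(duration, end)
        if end <= start:
            continue
        if cur_end is None or start > cur_end:
            if cur_end is not None:
                covered += cur_end - cur_start
            cur_start, cur_end = start, end
        else:
            # Overlapping replay: extend the current merged interval.
            cur_end = max(cur_end, end)
    if cur_end is not None:
        covered += cur_end - cur_start
    return covered / duration

def student_average(played, durations):
    """Mean coverage over all assigned videos; unopened videos count as 0."""
    total = sum(coverage(played.get(v, []), d) for v, d in durations.items())
    return total / len(durations)

def class_average(played_by_student, durations):
    """Mean of the per-student averages."""
    averages = [student_average(p, durations) for p in played_by_student.values()]
    return sum(averages) / len(averages)
```

Merging overlapping intervals means that replaying a segment does not raise coverage above 1, and a video a student never opened contributes 0 to that student's average.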

As shown in Figure 1b, coverage is uniformly lower than the simple videos viewed values, as might be expected. Coverage for Fall'14 roughly mirrors trends in video viewing percentage with a steady decline in coverage over the semester. However, there seem to be differences between Spring'15 and Fall'15 that were not as noticeable when looking at video viewing alone. Spring'15 has lower coverage in the first half of the term but jumps up at video 12, one video before the introduction of the new course requirement to contribute one post per video.

A one-way ANOVA showed statistically significant differences in coverage between the groups (F(2, 56) = 22.78, p < 0.001) (see Table 2). In post-hoc analysis, Fall'14 had significantly lower coverage values in comparison to both Spring'15 (p < 0.001) and Fall'15 (p < 0.001). However, there was no statistically significant difference between Spring'15 and Fall'15, despite an apparent visual distinction for the early videos in the courses in Figure 1b.

Looking at the data from a different perspective, we define punctuality as a measure of the first time a student viewed or interacted with some portion of a video relative to the start of class (see footnote 2). Figure 2 shows average student punctuality in hours for each lecture video in each course. Zero hours on the y-axis represents the start of class, with positive values indicating the video was watched before class, and negative values after class. Students in the Fall'14 semester almost exclusively viewed each video after class beyond the first four videos. This is drastically different compared to the average punctuality of Spring'15 and Fall'15. Students in the Spring'15 semester viewed all but three videos prior to class on average, and average punctuality for Fall'15 students was always positive even when considering one standard deviation from the mean.
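The sign convention for punctuality can be made concrete with a short sketch. The function names and the use of `None` for students who never opened a video are assumptions made for illustration.

```python
from datetime import datetime

def punctuality_hours(first_access, class_start):
    """Hours between first access and class start; positive means before class."""
    return (class_start - first_access).total_seconds() / 3600.0

def mean_punctuality(first_accesses, class_start):
    """Average over students who accessed the video; None entries are excluded."""
    values = [punctuality_hours(t, class_start)
              for t in first_accesses if t is not None]
    return sum(values) / len(values) if values else None

# Example with made-up timestamps: one student a day early, one three
# hours late, and one who never opened the video.
class_start = datetime(2015, 9, 1, 10, 0)
accesses = [datetime(2015, 8, 31, 10, 0), datetime(2015, 9, 1, 13, 0), None]
average = mean_punctuality(accesses, class_start)
```

Excluding students who never opened a video means the metric describes only those who watched, which is why it is best read alongside the videos viewed and coverage metrics.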

A one-way ANOVA showed statistically significant differences in average punctuality between the groups (F(2, 55) = 5.074, p = 0.009) (see Table 2). A post-hoc pair-wise analysis using Bonferroni correction showed that Fall'15 students viewed videos significantly earlier than Fall'14 (p = 0.008). Although there appear to be modest differences between Spring'15 and the other two semesters in Figure 2, these differences are not statistically significant.

At a high level, there were clear differences in students' compliance with course expectations to view video content prior to the start of face-to-face class sessions, and Fall'15 students exhibited behaviors closest to the ideal. We revisit possible reasons for these differences relative to sociotechnical changes in the course in the discussion section.

• Revisiting Course Content (RQ2)

A commonly cited benefit of using videos in the flipped environment is that students can revisit the material as needed. To investigate the extent to which students actually revisit content, we analyzed the number of non-empty sessions generated by each student for each video. There were statistically significant differences between the courses (F(2, 56) = 13.27, p < 0.001) where, on average, Fall'14 students viewed videos 0.70 (σ = 0.61) times, Spring'15 students viewed videos 1.47 (σ = 1.03) times, and Fall'15 students viewed videos 2.11 (σ = 0.93) times.

To visualize revisiting trends, Figure 3 shows the mean number of visits per student for each video and course. The ranges of averages in the Fall'14 and Spring'15 semesters are smaller than that of Fall'15, where a downward trend is apparent over the term. All videos except two in the Fall'14 semester have means below 1, also demonstrating the low compliance that term. Spring'15 has averages mostly between 1 and 2, and Fall'15 has higher averages than both other courses for most of the term until around video 18. We posit that the large drop-off in viewing at video 18 in Fall'15 may stem from students re-watching less because material from the end of the course was still fresh, though further exploration of this is beyond the scope of this analysis.

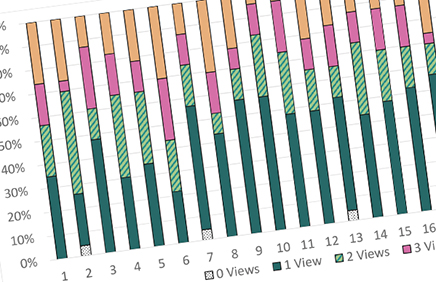

Limiting our analysis to the latter two terms, where students were markedly more compliant with course viewing policies, we were still surprised to observe that the proportion of students revisiting videos was lower than expected. Figure 4 shows the distribution of students visiting each video 0, 1, 2, 3, and 4 or more times during Fall'15. In Fall'15, only 11 of 25 videos were multiply accessed by a majority of students, and in Spring'15 only 2 of the 25 videos were multiply accessed by a majority of students. In other words, most students tend to access most videos only once or not at all, even when they exhibit positive behaviors with respect to content viewing deadlines.
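The revisit measures used in this subsection reduce to counting non-empty sessions per student and video. The following sketch assumes the sessions are available as `(student, video)` pairs; the names are hypothetical, and "majority" is read here as strictly more than half of the students.

```python
from collections import Counter

def visit_counts(sessions):
    """Number of non-empty sessions for each (student, video) pair."""
    return Counter((student, video) for student, video in sessions)

def mean_visits_per_video(counts, students, videos):
    """Mean visits per student for each video; students with no session count as 0."""
    return {v: sum(counts[(s, v)] for s in students) / len(students)
            for v in videos}

def multiply_accessed_by_majority(counts, students, videos):
    """Videos that more than half of the students opened at least twice."""
    return [v for v in videos
            if sum(1 for s in students if counts[(s, v)] >= 2) > len(students) / 2]
```

Because `Counter` returns 0 for missing keys, students who never opened a video are included in the denominators without special handling.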

Overall, students do not appear to be revisiting content with great regularity even in instances where they view content initially on time. However, there were clear cases of individual students who accessed videos many times and may thus skew class averages significantly. For example in Fall'15, the maximum revisits on a video for a single student was 20, and the maximum average across all videos for a single student was 5.08. Admittedly, one limitation of our analysis here is that we do not attempt to evaluate the quality of the revisit interaction nor the time lapse between visits to a video, but we see these as potential future extensions that could shed additional light on these behaviors.

• Correlations with Performance (RQ3)

To investigate the relationship between students' behaviors and their course performance, we employ non-parametric statistics due to the non-normality of grade distributions. Despite clear differences in viewing behaviors, a preliminary Kruskal-Wallis test found no statistically significant differences in grade distributions between the courses.

Table 3 shows the Spearman correlation coefficients between the various student behavior metrics and final course grades for each course separately. In Fall'14, videos viewed, coverage, punctuality and revisiting all positively correlated significantly with course grade, while only punctuality exhibited a statistically significant positive relationship across all semesters.
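Both non-parametric procedures named in this subsection operate on ranks rather than raw values. The sketch below gives generic textbook versions of the Spearman coefficient and the Kruskal-Wallis H statistic with average ranks for ties; it is not the authors' analysis code, the function names are assumptions, and significance testing is omitted.

```python
from collections import Counter

def average_ranks(values):
    """1-based ranks, with tied values sharing the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman's rho: the Pearson correlation of the two rank vectors.
    Undefined (division by zero) if either input is constant."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)

def kruskal_wallis_h(groups):
    """Tie-corrected Kruskal-Wallis H statistic for a list of samples.
    Undefined if every pooled value is identical."""
    pooled = [x for g in groups for x in g]
    ranks = average_ranks(pooled)
    n = len(pooled)
    total, pos = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[pos:pos + len(g)])
        total += rank_sum ** 2 / len(g)
        pos += len(g)
    h = 12.0 / (n * (n + 1)) * total - 3 * (n + 1)
    ties = sum(t ** 3 - t for t in Counter(pooled).values())
    return h / (1 - ties / (n ** 3 - n))
```

In this setting `x` would hold one behavior metric per student (for example, punctuality) and `y` the corresponding course grades, computed separately for each course.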

It is interesting to note that the course with the lowest overall compliance (Fall'14) with video watching had the largest number of significant indicators of performance. Put another way, when students are not adhering to course preparation, those that do voluntarily engage with content are likely to also perform well in the class. However, when students are more uniformly compliant with viewing (as in Spring'15 and Fall'15), many of these co-variates fall away. At the very least, this suggests that there is a strong need to quantitatively control for differences in viewing behaviors when conducting analyses of students' performance in flipped courses.

Discussion

Per the research questions posed, there are a number of important takeaways about student viewing behaviors in flipped classes:

- With regard to the question of student preparation in flipped classes (RQ1), we saw marked differences between courses. While Fall'15 students' behaviors closely approximated an instructor ideal—viewing all videos well in advance of class—students from Fall'14 were highly sporadic in viewing and generally watched videos after the class period where they were assigned. Viewing is not guaranteed and should not be assumed.

- Although a common benefit of using web-based video is the persistence of lecture material for later review, we found that students infrequently did so even when initial viewing compliance was high (RQ2). The reality is that students may not give persistence of videos the same value that instructors believe they do.

- Students who voluntarily watch all videos (and do so earlier and more completely) tend to perform better in the course (RQ3). However, when compliance with viewing prior to class is generally high in a course, only the punctuality measure significantly correlates with student performance.

Given that we observed such stark differences between the three terms under study, it is natural to ask whether these differences were just due to changes in the students enrolled each term or whether there was something different about the course design that might explain things (and thus be adopted by other flipped course instructors).

• Examining Course Differences (RQ4)

In examining the differences between courses, we first consider Fall'14. During that term, the instructor simply transferred his earlier videos into the TrACE system, but did not alter course expectations despite having greater abilities to monitor student interactions. In this sense, Fall'14 is a more typical flipped class, and viewing rates of less than 50% resemble self-reported data from earlier studies [6, 10].

Fall'15 students demonstrated considerably more desirable behaviors and watched the vast majority of video content early. We noted three key differences as compared to the course a year earlier:

- The instructor uniformly required students to post at least one video-related comment in each video as part of the participation grade, and he gave students a structured reflection prompt at the end of each video if they had not already posted something earlier.

- The playback interface in TrACE actively provided unobtrusive feedback to students about which video segments had (and had not) been viewed. This feature had previously not been available.

- Starting about 1 month into the course, TrACE began automatically reminding students to view content due in the next 48 hours along with overdue content if it had not been previously accessed.

While it is hard to say exactly which of these changes had the most influence, there are indications that the sociotechnical system made a difference. For example, the first major shift in student behaviors seemed to occur midway through Spring'15 around video 12. This nearly coincides with video 13 where the instructor instituted the new posting policy that term. Following the change in Spring'15, students were even more compliant with viewing requirements.

Evidence in support of the TrACE changes was also present. On average, students in Fall'15 were watching videos 34.6 hours in advance of class, which roughly falls within the 48-hour reminder threshold introduced that term. Taken together, we see significant potential for these features to encourage advance preparation among students in flipped courses, but more evidence is needed to quantitatively examine these impacts in other courses and qualitatively understand their role in shaping student attitudes.

• Limitations and Future Work

While the results of this study provide new empirical evidence that raises questions about common instructor intuitions, we recognize some inherent limitations of our data that suggest new opportunities for further work. First, our statistical analyses are somewhat limited by relatively small sample sizes as a result of the course sizes at this institution. At this point it would be tenuous to attempt generalizing findings from this study broadly to all instances of flipped courses in STEM disciplines. Our findings related to objective student behaviors should be taken as suggestive, and further replication studies with larger classes in other contexts would help tease out nuanced variations. In addition, we did not have access to demographic data given the nature of our human subjects approval, and future studies with larger sample sizes may also be interested in exploring claims about demographic data and student behaviors.

Further, we acknowledge there is a fundamental limitation with log-file data, such as that provided by TrACE. It is easy to compute accesses to videos and proportions of content played in the browser, but it is non-trivial to determine whether a student actually paid attention to the video as it played. Our use of "viewing" and "coverage" is thus necessarily coarse and errs on the side of inclusion. In follow-up work, there is an opportunity to model student interactions within a video to classify different forms of engagement during viewing. This may provide more detailed knowledge about which types of interactions matter most.

Lastly, a finer grained analysis of revisiting behaviors that accounts for elapsed time and the specific nature of the revisit is necessary. Coupling this type of log-data with interview data from students may also give us new insight into why students choose to revisit (or not).

Conclusion

The flipped course pedagogy continues to be a popular topic of discussion among educators; however, many of our intuitions about how students prepare for face-to-face class periods are not tied to objective evidence. This paper contributes to our understanding of flipped classes by providing empirical log data about student interactions with videos in a CS1 course. Further, it helps us understand how subtle changes in instructor expectations within a flipped course can produce dramatic changes in student behaviors.

For educators, the data presented make it clear that simply expecting students to prepare for class by watching videos is unlikely to yield compliance, and the majority of students do not often take advantage of the potential to review video content in flipped classes. However, adding something as small as a reflection prompt to each video and requiring students to complete it prior to class seems to increase both content coverage and punctuality metrics. Further, viewing rates here appeared higher than in previously reported studies utilizing entrance quizzes to encourage preparation. Put simply, students need an integrated reason to engage with the material and instructors may benefit from being more aware of their actions.

Our results also suggest that the research community investigating the efficacy of flipped classrooms is missing an important variable. The need to capture objective data about student preparation and control for its effect on student performance is clear. By incorporating such multivariate data into the analysis of flipped classes we may be able to better understand contextual factors leading to success and better transfer one educator's findings to new courses.

Acknowledgements

This work is funded in part by the National Science Foundation under grant IIS-1318345. Any opinions, findings, and conclusions, or recommendations expressed in this material are those of the authors and do not necessarily reflect the views of the NSF.

References

1. A. Amresh, A. R. Carberry, and J. Femiani. Evaluating the effectiveness of flipped classrooms for teaching CS1. In IEEE Frontiers in Education 2013, pages 733–735, 2013.

2. J. L. Bishop and M. A. Verleger. The flipped classroom: A survey of the research. In ASEE National Conference Proceedings, Atlanta, GA, 2013.

3. J. A. Day and J. D. Foley. Evaluating a web lecture intervention in a human-computer interaction course. IEEE Trans. on Education, 49(4):420–431, 2006.

4. B. Dorn, L. B. Schroeder, and A. Stankiewicz. Piloting TrACE: Exploring spatiotemporal anchored collaboration in asynchronous learning. In CSCW'15: Proceedings of the 18th ACM Conference on Computer Supported Cooperative Work & Social Computing, pages 393–403, 2015.

5. G. C. Gannod, J. E. Burge, and M. T. Helmick. Using the inverted classroom to teach software engineering. In ICSE '08: Proceedings of the 30th international conference on Software engineering, pages 777–786, 2008.

6. E. F. Gehringer and B. W. Peddycord III. The inverted-lecture model: a case study in computer architecture. In SIGCSE '13: Proceeding of the 44th ACM technical symposium on Computer science education, pages 489–494, 2013.

7. L. L. Lacher and M. C. Lewis. The effectiveness of video quizzes in a flipped class. In SIGCSE'15: Proceedings of the 46th ACM Technical Symposium on Computer Science Education, pages 224–228, 2015.

8. M. J. Lage, G. J. Platt, and M. Treglia. Inverting the classroom: A gateway to creating an inclusive learning environment. J. Economic Education, 31(1):30–43, 2000.

9. N. Lape, R. Levy, D. Yong, K. Haushalter, R. Eddy, and N. Hankel. Probing the inverted classroom: A controlled study of teaching and learning outcomes in undergraduate engineering and mathematics. In ASEE National Conference Proceedings, 2014.

10. K. Lockwood and R. Esselstein. The inverted classroom and the CS curriculum. In SIGCSE'13: Proceedings of the 44th ACM Technical Symposium on Computer Science Education, pages 113–118. ACM, 2013.

11. M. L. Maher, C. Latulipe, H. Lipford, and A. Rorrer. Flipped classroom strategies for CS education. In SIGCSE'15: Proceedings of the 46th ACM Technical Symposium on Computer Science Education, pages 218–223, 2015.

12. M. Ronchetti. Video-lectures over Internet. In G. Magoulas, editor, E-Infrastructures and Technologies for Lifelong Learning: Next Generation Environments, pages 253–270. Information Science Reference, Hershey, PA, 2011.

13. D. Yong, R. Levy, and N. Lape. Why no difference? A controlled flipped classroom study for an introductory differential equations course. Problems, Resources, and Issues in Mathematics Undergraduate Studies, 25(9-10):907–921, 2015.

Authors

Suzanne L. Dazo

University of Nebraska at Omaha


Nicholas R. Stepanek

University of Nebraska at Omaha


Robert Fulkerson

University of Nebraska at Omaha


Brian Dorn

University of Nebraska at Omaha


Footnotes

1. Videos not viewed by a student have a coverage of 0.

2. The punctuality metric excludes students who never watched the video, as it cannot be computed for them.

Figures

Figure 1. Viewing and Coverage Metrics by Video (X-Axis)


Figure 2. Average student punctuality in hours (Y) per lecture video (X)


Figure 3. Average student visits (Y) per video (X)


Figure 4. Visiting proportions for Fall 2015 students


Tables

Table 1. Descriptive Information on Course Videos


Table 2. Mean Viewing Statistics with Std. Deviations (σ)


Table 3. Spearman correlation coefficients for behavioral data and course grade


Copyright held by the owner/author(s). Publication rights licensed to ACM.

The Digital Library is published by the Association for Computing Machinery. Copyright © 2016 ACM, Inc.
